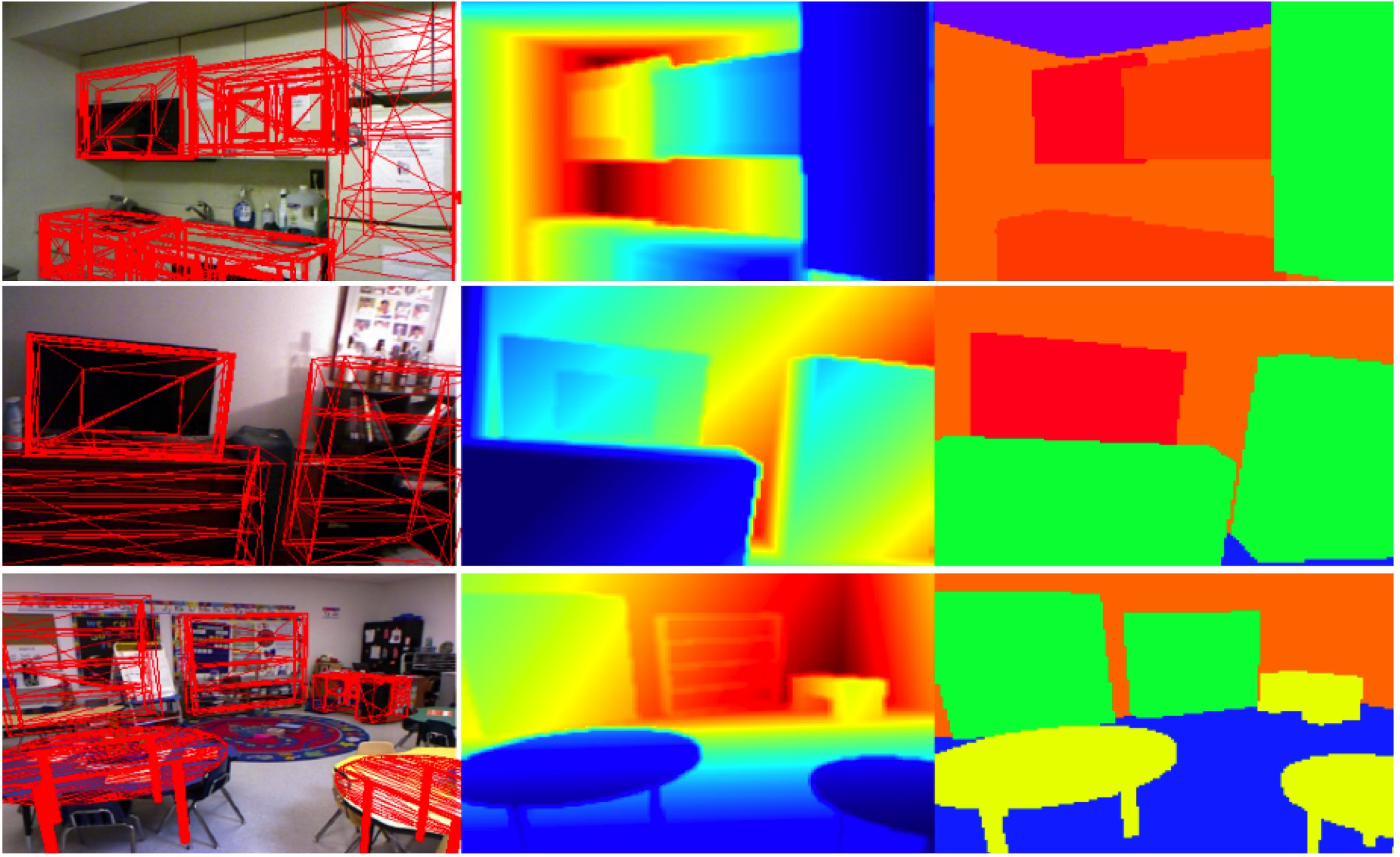

Object recognition, scene layout analysis, 3D reconstruction, and motion estimation can work together to provide an understanding of the visual world. Here, RGB-D imagery is interpreted by fitting 3D CAD object models to the scene [ ].

Object and scene understanding involves determining, at the very least, what is in an image and where it is. Beyond that, we want to know about the scene itself and how the objects in it are spatially related. The dominant paradigms treat this primarily as a pattern recognition problem: learn a filter-based representation of images that makes detection and classification easier. In contrast, our work on recognition often brings in 3D knowledge about objects in a variety of ways.

Our work addresses:

- object modeling

- object detection

- object recognition

- scene understanding

- scene segmentation

- humans interacting with objects

- machine learning methods

- statistical modeling of scene properties

- geometric models and reasoning

Scene understanding, in contrast to object recognition, attempts to analyze objects in context with respect to the 3D structure of the scene, its layout, and the spatial, functional, and semantic relationships between objects. Our research in this area combines object detection/recognition with 3D reconstruction and spatial reasoning. We believe that the integrated analysis of low-level image features, together with high-level semantic and 3D object models, will enable robust scene understanding in complex and ambiguous environments and will provide the foundation for further reasoning.