Capturing and Animation of Body and Clothing from Monocular Video

2022

Conference Paper


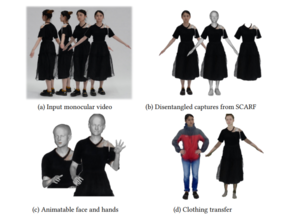

We propose SCARF (Segmented Clothed Avatar Radiance Field), a hybrid model combining a mesh-based body with a neural radiance field. Integrating the mesh into volume rendering, in combination with a differentiable rasterizer, enables us to optimize SCARF directly from monocular videos without any 3D supervision. This hybrid representation allows SCARF to (i) animate the clothed body avatar by changing body poses (including hand articulation and facial expressions), (ii) synthesize novel views of the avatar, and (iii) transfer clothing between avatars in virtual try-on applications. We demonstrate that SCARF reconstructs clothing with higher visual quality than existing methods, that the clothing deforms with changing body pose and body shape, and that clothing can be successfully transferred between avatars of different subjects.
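The abstract only states that the body mesh is integrated into volume rendering. As a rough illustration under our own assumptions, and not SCARF's actual implementation, one way to render a single ray through a radiance field in front of an opaque body mesh is to apply standard NeRF-style alpha compositing only up to the first mesh intersection, then give whatever transmittance remains to the mesh color at that intersection. The function `composite_ray` and all of its parameters are hypothetical.

```python
import math
from typing import Optional, Sequence, Tuple

RGB = Tuple[float, float, float]


def composite_ray(
    t_samples: Sequence[float],
    sigmas: Sequence[float],
    colors: Sequence[RGB],
    t_far: float,
    t_mesh: Optional[float] = None,
    mesh_color: RGB = (0.0, 0.0, 0.0),
) -> Tuple[RGB, float]:
    """Hypothetical sketch: composite radiance-field samples in front of an opaque mesh.

    t_samples:  sorted sample depths along the ray
    sigmas:     non-negative densities at each sample
    colors:     RGB color at each sample
    t_far:      far bound of the ray, used when the ray misses the mesh
    t_mesh:     depth of the first mesh intersection, or None on a miss
    mesh_color: mesh RGB at the intersection (assumed, e.g. from a rasterizer)
    Returns the composited RGB and the residual transmittance.
    """
    # The ray is cut off at the opaque body surface if it hits the mesh.
    t_end = t_mesh if t_mesh is not None else t_far
    rgb = [0.0, 0.0, 0.0]
    transmittance = 1.0
    for i, t in enumerate(t_samples):
        if t >= t_end:
            break  # samples behind the opaque surface are occluded
        # Interval length to the next sample, clipped at the end of the ray.
        t_next = t_samples[i + 1] if i + 1 < len(t_samples) else t_end
        delta = min(t_next, t_end) - t
        alpha = 1.0 - math.exp(-sigmas[i] * delta)
        weight = transmittance * alpha
        for k in range(3):
            rgb[k] += weight * colors[i][k]
        transmittance *= 1.0 - alpha
    if t_mesh is not None:
        # The opaque mesh absorbs all light that passes through the volume in front of it.
        for k in range(3):
            rgb[k] += transmittance * mesh_color[k]
        transmittance = 0.0
    return (rgb[0], rgb[1], rgb[2]), transmittance
```

Returning the residual transmittance lets a caller blend in a background color on rays that miss the body entirely, while rays that hit the mesh are always fully opaque.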

| Field | Value |
|---|---|
| Author(s) | Yao Feng, Jinlong Yang, Marc Pollefeys, Michael J. Black, Timo Bolkart |
| Book Title | SIGGRAPH Asia 2022 Conference Papers |
| Pages | 1–9 |
| Year | 2022 |
| Month | December |
| Series | SA'22 |
| Department(s) | Perceiving Systems |
| BibTeX Type | Conference Paper (inproceedings) |
| Paper Type | Conference |
| DOI | 10.1145/3550469.3555423 |
| Event Place | Daegu, Republic of Korea |
| Article Number | 45 |
| State | Published |
| Links | project, code |

BibTeX

```
@inproceedings{Feng2022scarf,
  title = {Capturing and Animation of Body and Clothing from Monocular Video},
  author = {Feng, Yao and Yang, Jinlong and Pollefeys, Marc and Black, Michael J. and Bolkart, Timo},
  booktitle = {SIGGRAPH Asia 2022 Conference Papers},
  pages = {1--9},
  series = {SA'22},
  month = dec,
  year = {2022},
  doi = {10.1145/3550469.3555423},
  month_numeric = {12}
}
```