Dyna: 4D meshes of dynamic human soft tissue motion

2015-08-15

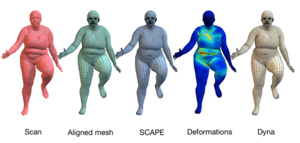

To look human, digital full-body avatars need to have soft tissue deformations like those of real people. Current methods for physics simulation of soft tissue lack realism, are computationally expensive, or are hard to tune. Learning soft tissue motion from examples, however, has been limited by the lack of dense, high-resolution training data. We address this using a 4D capture system and a method for accurately registering 3D scans across time to a template mesh. Using over 40,000 scans of ten subjects, we compute how soft tissue motion causes mesh triangles to deform relative to a base 3D body model and learn a low-dimensional linear subspace approximating this soft-tissue deformation. This dataset contains all 40,000 training meshes, which share the same mesh topology. See the Dynamic FAUST dataset for the raw scans and improved registered meshes.
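As a rough illustration of what "learning a low-dimensional linear subspace" can look like, the sketch below fits a PCA basis to a matrix of per-frame deformation vectors with NumPy. It is only a sketch under stated assumptions: PCA via SVD is one common way to obtain such a subspace and is not claimed to be the exact procedure used for Dyna, the data are synthetic, and the names `fit_linear_subspace`, `project`, and `reconstruct` are hypothetical helpers, not part of any released code.

```python
import numpy as np


def fit_linear_subspace(deformations, n_components):
    """Fit a PCA basis to deformation vectors.

    deformations: (n_frames, n_features) array, one flattened
    deformation vector per frame (hypothetical layout).
    Returns the mean vector and an (n_components, n_features) basis.
    """
    mean = deformations.mean(axis=0)
    centered = deformations - mean
    # Rows of vt are orthonormal principal directions, ordered by variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean, vt[:n_components]


def project(deformations, mean, basis):
    """Map deformation vectors to low-dimensional coefficients."""
    return (deformations - mean) @ basis.T


def reconstruct(coeffs, mean, basis):
    """Map coefficients back to approximate deformation vectors."""
    return coeffs @ basis + mean


# Synthetic stand-in data (NOT Dyna data): 200 frames of 90-dimensional
# vectors that lie on a 5-dimensional affine subspace.
rng = np.random.default_rng(0)
n_frames, n_features, true_rank = 200, 90, 5
latent = rng.normal(size=(n_frames, true_rank))
mixing = rng.normal(size=(true_rank, n_features))
data = latent @ mixing + 0.5

mean, basis = fit_linear_subspace(data, true_rank)
coeffs = project(data, mean, basis)
approx = reconstruct(coeffs, mean, basis)
max_error = np.abs(approx - data).max()
```

Because every mesh in the dataset shares one topology, per-frame quantities can be stacked into a fixed-width matrix like `data` above; with real captures the subspace would only approximate the deformations rather than reproduce them exactly.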

| Author(s): | Pons-Moll, Gerard and Romero, Javier and Mahmood, Naureen and Black, Michael J. |

| Department(s): | Perceiving Systems |

| Release Date: | 2015-08-15 |

| Copyright: | Max-Planck-Gesellschaft zur Förderung der Wissenschaften e.V. |

| External Link: | http://dyna.is.tue.mpg.de/ |