FlowCap: 2D Human Pose from Optical Flow

2015

Conference Paper


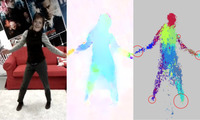

We estimate 2D human pose from video using only optical flow. The key insight is that dense optical flow can provide information about 2D body pose. Like range data, flow is largely invariant to appearance but unlike depth it can be directly computed from monocular video. We demonstrate that body parts can be detected from dense flow using the same random forest approach used by the Microsoft Kinect. Unlike range data, however, when people stop moving, there is no optical flow and they effectively disappear. To address this, our FlowCap method uses a Kalman filter to propagate body part positions and velocities over time and a regression method to predict 2D body pose from part centers. No range sensor is required and FlowCap estimates 2D human pose from monocular video sources containing human motion. Such sources include hand-held phone cameras and archival television video. We demonstrate 2D body pose estimation in a range of scenarios and show that the method works with real-time optical flow. The results suggest that optical flow shares invariances with range data that, when complemented with tracking, make it valuable for pose estimation.
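The role of the Kalman filter in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: it assumes a constant-velocity motion model, filters the x and y image axes independently, and uses made-up noise parameters. The names `AxisKalman`, `PartTracker`, and `demo` are hypothetical. The point it shows is that when a part detection is missing (for example, because the person stopped moving and the flow vanished), the filter still predicts the part center from its last estimated position and velocity.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class AxisKalman:
    """Constant-velocity Kalman filter for one image axis (state: position, velocity)."""
    pos: float
    vel: float = 0.0
    p_pp: float = 1.0    # position variance
    p_pv: float = 0.0    # position/velocity covariance
    p_vv: float = 100.0  # velocity variance (velocity is unknown at start)
    q: float = 0.01      # assumed acceleration noise intensity
    r: float = 1.0       # assumed measurement noise variance

    def predict(self, dt: float = 1.0) -> None:
        # Propagate the state with x' = F x, where F = [[1, dt], [0, 1]].
        self.pos += self.vel * dt
        a, b, c = self.p_pp, self.p_pv, self.p_vv
        # Covariance: P' = F P F^T + Q (white-acceleration process noise).
        self.p_pp = a + 2 * dt * b + dt * dt * c + self.q * dt ** 4 / 4
        self.p_pv = b + dt * c + self.q * dt ** 3 / 2
        self.p_vv = c + self.q * dt ** 2

    def update(self, z: float) -> None:
        # Only the position is observed: H = [1, 0].
        s = self.p_pp + self.r
        k_p, k_v = self.p_pp / s, self.p_pv / s
        residual = z - self.pos
        self.pos += k_p * residual
        self.vel += k_v * residual
        a, b, c = self.p_pp, self.p_pv, self.p_vv
        # P = (I - K H) P
        self.p_pp = (1 - k_p) * a
        self.p_pv = (1 - k_p) * b
        self.p_vv = c - k_v * b


class PartTracker:
    """Tracks one 2D body part center with two independent axis filters."""

    def __init__(self, x: float, y: float) -> None:
        self.fx = AxisKalman(x)
        self.fy = AxisKalman(y)

    def step(self, detection: Optional[Tuple[float, float]]) -> Tuple[float, float]:
        # Always predict; correct only when a detection is available.
        self.fx.predict()
        self.fy.predict()
        if detection is not None:
            self.fx.update(detection[0])
            self.fy.update(detection[1])
        return self.fx.pos, self.fy.pos


def demo() -> Tuple[PartTracker, List[Tuple[float, float]]]:
    # A part moving 2 px/frame in x for 19 frames, then 5 frames with no detection.
    detections: List[Optional[Tuple[float, float]]] = [
        (2.0 * t, 100.0) for t in range(1, 20)
    ]
    detections += [None] * 5
    tracker = PartTracker(0.0, 100.0)
    path = [tracker.step(d) for d in detections]
    return tracker, path


if __name__ == "__main__":
    tracker, path = demo()
    print("estimated x velocity:", round(tracker.fx.vel, 3))
    print("position after 5 missing frames:", path[-1])
```

In this sketch the estimate keeps advancing along x during the five frames without detections, which is the behavior the abstract relies on when the subject stops producing flow. A full system would track every part and feed the filtered part centers to the pose regressor.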

| Author(s): | Romero, Javier and Loper, Matthew and Black, Michael J. |

| Book Title: | Pattern Recognition, Proc. 37th German Conference on Pattern Recognition (GCPR) |

| Volume: | LNCS 9358 |

| Pages: | 412--423 |

| Year: | 2015 |

| Publisher: | Springer |

| Department(s): | Perceiving Systems |

| Research Project(s): | 2D Pose from Optical Flow, FlowCap |

| Bibtex Type: | Conference Paper (inproceedings) |

| Paper Type: | Conference |

| Event Name: | GCPR 2015 |

| Event Place: | Aachen |

| Links: | video |


| Attachments: | pdf preprint |

BibTex:

    @inproceedings{romero:gcpr:2015,
      title = {{FlowCap}: {2D} Human Pose from Optical Flow},
      author = {Romero, Javier and Loper, Matthew and Black, Michael J.},
      booktitle = {Pattern Recognition, Proc. 37th German Conference on Pattern Recognition (GCPR)},
      volume = {LNCS 9358},
      pages = {412--423},
      publisher = {Springer},
      year = {2015},
      doi = {}
    }